Watch Your Step(ping): Atoms Breaking Apart

By Mathias Krause

November 30, 2021

Introduction

This blog article highlights some technical details of a CPU bug we observed on a specific Intel Atom CPU. The bug hunting journey was equally educational and frustrating, since the debuggability of the underlying issue was highly limited by the CPU simply misbehaving at an architectural level. None of the processor’s publicly exported (and publicly documented) interfaces shed light on where the root cause could be found. The “recent” rise in public reports of similar issues here, here and here, together with our fading hope that Intel would comment on the matter, gave us the final push to finish this blog and contribute our findings on Intel CPU bugs to the wider community.

Enjoy a highly technical article peppered with lots of crash dumps and disassembly listings, doubling as a case study on the lengths our team goes to to support our customers, even if an issue lies with another vendor.

A Weird Bug Report

Back in September 2020, our attention was brought to an interesting issue one of our customers had on one of their platforms, an “Intel(R) Atom(TM) Processor E3950 @ 1.60GHz”. It was a kernel panic when running a seemingly harmless workload. Strangely enough, the issue occurred only on the newest stepping of that very CPU model. Older steppings and different CPU models worked just fine.

The report included a screenshot that, albeit truncated and lacking the beginning of the Oops message, still contained the register and code dump and the failing RIP. CR2 wasn’t referring to any of the register values – wasn’t even close to any of them – so we guessed it might be a general protection fault, i.e. a memory access to a non-canonical address. Later screenshots confirmed this by having a reference to general_protection() in the Oops stack backtrace. One of the registers also matched the non-canonical condition, so this initially looked like an easy kernel memory corruption bug to hunt down. Further analysis showed otherwise, as decoding the opcode bytes gave us this (trapping instruction is commented):

ffffffff812e938a: e8 51 8f fb ff callq 0xffffffff812a22e0

ffffffff812e938f: 49 89 c1 mov %rax,%r9

ffffffff812e9392: 49 89 c2 mov %rax,%r10

ffffffff812e9395: 49 83 e1 fc and $0xfffffffffffffffc,%r9

ffffffff812e9399: 0f 84 77 02 00 00 je 0xffffffff812e9616

ffffffff812e939f: 49 8b 41 28 mov 0x28(%r9),%rax

ffffffff812e93a3: 48 83 78 40 00 cmpq $0x0,0x40(%rax)

ffffffff812e93a8: 0f 84 74 02 00 00 je 0xffffffff812e9622

ffffffff812e93ae: 83 fb 02 cmp $0x2,%ebx

ffffffff812e93b1: 74 08 je 0xffffffff812e93bb ; trapping instruction

ffffffff812e93b3: 81 64 24 64 ff ff ff andl $0xdfffffff,0x64(%rsp)

ffffffff812e93ba: df

ffffffff812e93bb: 4d 39 ce cmp %r9,%r14

ffffffff812e93be: 0f 84 6a 02 00 00 je 0xffffffff812e962e

From the RIP value we know that this code sequence was part of the SYSC_epoll_ctl() kernel function, which implements the epoll_ctl() system call. That API had multiple bugs in the past, so this could have just been another one. What’s special in the above code listing, however, is that the instruction triggering the general protection fault (or #GP for short) is a conditional jump. The Intel SDM’s list of conditions under which a conditional jump can generate a #GP in 64-bit mode is short:

64-Bit Mode Exceptions

…

#GP(0) If the memory address is in a non-canonical form.

This condition isn’t fulfilled in this case. The jump’s target address is 0xffffffff812e93bb – just 10 bytes past the trapping instruction. And that address is, for sure, a canonical one located in the kernel’s virtual address space. It’s even a mapped one, as we’re able to read and dump its content. So something didn’t add up here.
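The canonical-address condition can be checked with a few lines of Python. This is a minimal sketch that assumes 48-bit virtual addresses (4-level paging); the helper name is_canonical is ours, not something taken from the SDM or the kernel.

```python
def is_canonical(addr: int, va_bits: int = 48) -> bool:
    """Return True if bits 63..(va_bits - 1) of a 64-bit address are all equal.

    Assumes 4-level paging (48-bit virtual addresses) by default.
    """
    top = addr >> (va_bits - 1)                # bits 63..47 for va_bits=48
    all_ones = (1 << (64 - va_bits + 1)) - 1   # 17 set bits for va_bits=48
    return top == 0 or top == all_ones


jump_target = 0xFFFFFFFF812E93BB  # target of the trapping je in the listing
print(is_canonical(jump_target))           # True: a regular kernel address
print(is_canonical(0x8000000000001000))    # False: upper bits are not uniform
```

Under that assumption, the jump target passes the check, so the documented #GP condition does not explain the trap.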

JCC Erratum Reloaded?

As we got more reports from the customer with different code locations crashing, a certain pattern became visible: all the reports had in common that there was a jump instruction either directly involved or nearby. That rang a bell: SKX102, aka the “JCC Erratum” which Intel summarizes as:

Processor May Behave Unpredictably on Complex Sequence of Conditions Which Involve Branches That Cross 64 Byte Boundaries

This doesn’t quite match what is mentioned in the JCC whitepaper, which speaks about 32-byte boundaries. But even then, it still wouldn’t apply to the above code listing, as the trapping macro-fused instruction pair (cmp+je) only crosses a 16-byte boundary. Regardless, the involvement of jump instructions in all of the reports we got so far made us look deeper.
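To make the boundary argument concrete, the following sketch checks which power-of-two boundaries the cmp+je pair from the first listing spans. The addresses and instruction lengths come from that listing; the helper crosses_boundary is a name we made up for illustration.

```python
def crosses_boundary(start: int, length: int, boundary: int) -> bool:
    """True if the byte range [start, start + length) spans a multiple of `boundary`."""
    last = start + length - 1
    return start // boundary != last // boundary


# cmp $0x2,%ebx (3 bytes) at ...93ae, directly followed by je (2 bytes) at ...93b1
fused_start = 0xFFFFFFFF812E93AE
fused_len = 3 + 2

for boundary in (16, 32, 64):
    print(boundary, crosses_boundary(fused_start, fused_len, boundary))
# 16 True
# 32 False
# 64 False
```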

Digging through the whitepaper and the Atom specs revealed that the required preconditions for the JCC Erratum wouldn’t apply, as the Atom CPU family lacks the DSB (Decode Stream Buffer, a µops cache). A quick double-check with perf confirmed this as well:

root@buster:~# perf list | grep -i dsb

root@buster:~#

Yet that pattern matched just too well to be ignored. Perhaps it was a new, related bug that didn’t need a DSB? So we analyzed the jump instructions in the kernel binary further, as these seemed to be the trigger somehow.

The kernel was making use of PaX’s RAP feature, which adds one unconditional direct jump per call instruction to embed the so-called RAP ret hash – a 64-bit identifier at a fixed offset from the instruction following the call instruction, i.e. the return location of the call. It is used to implement the deterministic part of RAP’s backward edge control flow integrity checks.

Short Detour on RAP

Before traveling further down the rabbit hole, trying to find the pattern that triggers the bug conditions, we need to take a short detour explaining RAP. It has evolved significantly since its introduction in 2015 and the last public code release in 2017, but the basic principles haven’t changed.

RAP’s instrumentation nowadays consists of three parts:

RAP_CALL: embeds a RAP call hash in front of functions and emits code that verifies it on indirect calls,

RAP_RET: embeds a RAP ret hash prior to call instructions and emits code that verifies it prior to returning from the callee function and

RAP_XOR: a probabilistic method that protects the on-stack return address by mirroring it in a register that gets XOR’ed with a secret cookie. Prior to returning from a function, the value gets XOR’ed again with the cookie and compared to the on-stack return address. On mismatch, a RAP exception gets raised in form of an invalid opcode exception (#UD).
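The RAP_XOR idea from the last item can be modeled in a few lines. This is only a conceptual sketch of the XOR-and-compare logic, not RAP's actual implementation; the function names and the randomly generated cookie are assumptions made for illustration.

```python
import secrets

MASK64 = (1 << 64) - 1


def rap_xor_prologue(on_stack_return: int, cookie: int) -> int:
    """Mirror the return address, XOR'ed with the cookie, as a register would hold it."""
    return (on_stack_return ^ cookie) & MASK64


def rap_xor_epilogue_ok(on_stack_return: int, mirrored: int, cookie: int) -> bool:
    """Undo the XOR and compare with the current on-stack return address."""
    return ((mirrored ^ cookie) & MASK64) == on_stack_return


cookie = secrets.randbits(64)               # hypothetical secret cookie
return_address = 0xFFFFFFFF81003F25         # example kernel return address
mirrored = rap_xor_prologue(return_address, cookie)

print(rap_xor_epilogue_ok(return_address, mirrored, cookie))          # True
print(rap_xor_epilogue_ok(return_address + 0x100, mirrored, cookie))  # False
```

An attacker who overwrites the on-stack return address without knowing the cookie cannot fix up the mirrored value to match, which is what makes the check probabilistic rather than deterministic.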

…and the KERNEXEC compiler plugin

The failing kernel had yet another PaX feature enabled that leads to code instrumentation: the KERNEXEC compiler plugin. In combination with some manual assembly-level instrumentation, it effectively prevents user code execution while in kernel mode by instrumenting all indirect control flow transfers and enforcing that the most significant bit of a to-be-followed function pointer is 1. This scheme either leads to a valid kernel address (if the MSB was 1 already) or a non-canonical address that will trigger a general protection fault. It can be considered a precursor to SMEP, an alternative implementation that doesn’t rely on a control register bit to stay enabled but is a low cost code integrity feature that is “always on.”
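The effect of forcing the most significant bit can be sketched as follows. The example assumes 48-bit virtual addresses, and the userland pointer value is hypothetical; the helper names are ours.

```python
MSB = 1 << 63
MASK64 = (1 << 64) - 1


def is_canonical(addr: int, va_bits: int = 48) -> bool:
    """True if bits 63..(va_bits - 1) are all equal (4-level paging assumed)."""
    top = addr >> (va_bits - 1)
    return top == 0 or top == (1 << (64 - va_bits + 1)) - 1


def kernexec_force_msb(ptr: int) -> int:
    """Model of the instrumentation: set bit 63 of a function pointer."""
    return (ptr | MSB) & MASK64


kernel_ptr = 0xFFFFFFFF8106A0A0   # a kernel text address
user_ptr = 0x0000000000401000     # hypothetical userland address

for ptr in (kernel_ptr, user_ptr):
    forced = kernexec_force_msb(ptr)
    print(hex(ptr), "->", hex(forced), "canonical:", is_canonical(forced))
```

The kernel pointer is left unchanged, while the userland pointer turns into a non-canonical address that faults on use.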

A typical code sequence for RAP and the KERNEXEC plugin (here, the BTS variant) for the target kernel looks like this (explanations on the right):

ffffffff81003ea0 <smp_spurious_interrupt>:

ffffffff81003ea0: 55 push %rbp ; typical function prologue with CONFIG_FRAME_POINTER=y

ffffffff81003ea1: 48 89 e5 mov %rsp,%rbp

ffffffff81003ea4: 41 57 push %r15

ffffffff81003ea6: 49 bf 00 00 00 00 00 00 00 80 movabs $0x8000000000000000,%r15 ; for KERNEXEC, to set the MSB of function pointers easily

:::

ffffffff81003eb4: 4c 8b 6d 08 mov 0x8(%rbp),%r13 ; RAP_XOR: load on-stack return address into R13

:::

ffffffff81003ebd: 4d 31 e5 xor %r12,%r13 ; RAP_XOR: xor R13 with secret cookie in R12 (a reserved register under RAP_XOR)

:::

ffffffff81003ef7: 4c 09 fe or %r15,%rsi ; KERNEXEC: force MSB of function pointer to be 1

:::

ffffffff81003f00: 48 81 7e f8 59 3b 95 33 cmpq $0x33953b59,-0x8(%rsi) ; RAP_CALL: RSI holds a function pointer. If legitimate, RAP call hash in preceding 8 bytes

ffffffff81003f08: ,---- 0f 85 d4 00 00 00 jne ffffffff81003fe2 ; RAP_CALL: if RAP call hash check fails, jump to RAP trap below

ffffffff81003f0e: | ,-- eb 10 jmp ffffffff81003f20 ; RAP_RET: unconditional jump to skip the RAP ret hash following from executing

ffffffff81003f10: | | cc int3 ; RAP_RET: non-executed instructions, only to "pad" the RAP ret hash

ffffffff81003f11: | | cc int3 ; RAP_RET: same

ffffffff81003f12: | | cc int3 ; RAP_RET: same

ffffffff81003f13: | | 48 b8 a7 c4 6a cc ff ff ff ff movabs $0xffffffffcc6ac4a7,%rax ; RAP_RET: 64 bit RAP RET hash, masked in a MOVABS instruction (to please disassemblers)

ffffffff81003f1d: | | cc int3 ; RAP_RET: more non-executed instructions

ffffffff81003f1e: | | cc int3 ; RAP_RET: same

ffffffff81003f1f: | | cc int3 ; RAP_RET: same

ffffffff81003f20: | `-> 66 66 90 data16 xchg %ax,%ax ; not part of RAP, but our retpoline plugin's asm alternative (external thunk, lfence, etc.)

ffffffff81003f23: | ff d6 callq *%rsi ; indirect call, replaced at runtime via asm alternatives if retpolines are required

ffffffff81003f25: | ba 01 00 00 00 mov $0x1,%edx ; next instruction, exactly 16 bytes after the RAP ret hash

::: :

ffffffff81003fb5: | 48 8b 45 08 mov 0x8(%rbp),%rax ; RAP_XOR: load on-stack return address into RAX

ffffffff81003fb9: | 4d 31 e5 xor %r12,%r13 ; RAP_XOR: undo cookie xor of function prologue

ffffffff81003fbc: | 4c 39 e8 cmp %r13,%rax ; RAP_XOR: compare current return address with the one read on function entry

ffffffff81003fbf: | ,-- 75 1e jne ffffffff81003fdf ; RAP_XOR: jump to RAP trap on mismatch

ffffffff81003fc1: | | 48 81 78 f0 f8 75 5d c0 cmpq $0xffffffffc05d75f8,-0x10(%rax) ; RAP_RET: RAP ret hash check, verifying the caller has a proper hash 16 bytes prior to the

| | ; return location, which should be a "RAP call" like above

ffffffff81003fc9: ,-+-+-- 75 1a jne ffffffff81003fe5 ; RAP_RET: jump to RAP trap on mismatch

ffffffff81003fcb: | | | 5b pop %rbx ; function epilogue starts, restoring register values

ffffffff81003fcc: | | | 41 5d pop %r13

ffffffff81003fce: | | | 41 5e pop %r14

ffffffff81003fd0: | | | 41 5f pop %r15

ffffffff81003fd2: | | | 5d pop %rbp

ffffffff81003fd3: | | | 48 0f ba 2c 24 3f btsq $0x3f,(%rsp) ; KERNEXEC, forcing the MSB of the on-stack return address to be 1

ffffffff81003fd9: | | | c3 retq ; actual function return

ffffffff81003fda: | | | 0f 0b ud2 ; speculation barrier

ffffffff81003fdc: | | | 0f b9 3b ud1 (%rbx),%edi ; RAP hash mismatch trap instructions (will be handled by the #UD handler)

ffffffff81003fdf: | | `-> 0f b9 00 ud1 (%rax),%eax ; ...the one for the RET XOR mismatch

ffffffff81003fe2: | `---> 0f b9 31 ud1 (%rcx),%esi ; ...the one for the indirect call above

ffffffff81003fe5: `-----> 0f b9 00 ud1 (%rax),%eax ; ...one more for the RAP RET mismatch

:::

::: // seeking to a function for the above indirect call //

:::

ffffffff8106a08e: 66 90 xchg %ax,%ax ; inter-function padding, emitted by the assembler

ffffffff8106a090: cc int3 ; RAP_CALL: start of the RAP "call hash" sequence -- won't be executed

ffffffff8106a091: cc int3

ffffffff8106a092: cc int3

ffffffff8106a093: cc int3

ffffffff8106a094: cc int3

ffffffff8106a095: cc int3

ffffffff8106a096: 48 b8 59 3b 95 33 00 00 00 00 movabs $0x33953b59,%rax ; RAP_CALL: RAP call hash embedded in a MOVABS instruction, 8 bytes in front of the

; function symbol (matching the check at 0xffffffff81003f00)

ffffffff8106a0a0 <native_apic_mem_read>: ; actual function starts here

ffffffff8106a0a0: 89 ff mov %edi,%edi

ffffffff8106a0a2: 8b 87 00 d0 5f ff mov -0xa03000(%rdi),%eax

ffffffff8106a0a8: 48 0f ba 2c 24 3f btsq $0x3f,(%rsp) ; KERNEXEC, forcing the MSB of the on-stack return address to be 1

ffffffff8106a0ae: c3 retq

ffffffff8106a0af: 0f 0b ud2 ; speculation barrier

ffffffff8106a0b1: 66 66 2e 0f 1f 84 00 00 00 00 00 data16 nopw %cs:0x0(%rax,%rax,1) ; inter-function padding, again

ffffffff8106a0bc: 0f 1f 40 00 nopl 0x0(%rax)

ffffffff8106a0c0: cc int3 ; RAP call hash sequence for the next function starts

:::

The observant reader might notice that RAP puts some additional pressure on the BTB (Branch Target Buffer) as all call instructions are preceded by an unconditional direct jump – a branch instruction with a target that’s yet another branch instruction.

The backward edge check leads to an L1D cache load for the comparison of the RAP ret hash. That “data” should already be part of the L1I cache, but needs to be transferred to L1D as it’s not an instruction fetch but a memory load operation (see ffffffff81003fc1 above).
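To illustrate what that load reads, the sketch below takes the opcode bytes of the call site at ffffffff81003f13 from the listing above and extracts the 8 bytes located 16 bytes before the return location, as a callee of this call site would. It also shows that the 32-bit immediate of cmpq is sign-extended to 64 bits before the comparison. The helper names are ours.

```python
import struct

BASE = 0xFFFFFFFF81003F13
# movabs (RAP ret hash), 3x int3, data16 xchg %ax,%ax, callq *%rsi
code = bytes.fromhex("48b8a7c46accffffffff" "cccccc" "666690" "ffd6")
return_address = BASE + len(code)  # address of the instruction after the call


def read_ret_hash(mem: bytes, base: int, ret: int) -> int:
    """Load the little-endian 64-bit value found 16 bytes before `ret`."""
    (value,) = struct.unpack_from("<Q", mem, ret - 16 - base)
    return value


def sign_extend_imm32(imm32: int) -> int:
    """Sign-extend a 32-bit immediate to 64 bits, as cmpq does with its operand."""
    return imm32 | 0xFFFFFFFF00000000 if imm32 & 0x80000000 else imm32


print(hex(return_address))                           # 0xffffffff81003f25
print(hex(read_ret_hash(code, BASE, return_address)))  # 0xffffffffcc6ac4a7
print(hex(sign_extend_imm32(0xCC6AC4A7)))            # 0xffffffffcc6ac4a7
```

Note that the hash checked inside smp_spurious_interrupt itself (at ffffffff81003fc1) belongs to that function's own callers and therefore differs from the one embedded at this call site.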

Clearly, a RAP-enabled kernel has more branches than a vanilla kernel, but how many more?

$ for i in vmlinux-*; do printf "%25s: " "$i"; objdump -wdr "$i" | grep 'jmp' | wc -l; done

vmlinux-rap: 224882

vmlinux-vanilla: 79052

A significant increase: a RAP-enabled kernel has ~2.8 times more branches than a vanilla kernel.

To check for the conditions of the JCC Erratum, we’re only interested in jumps that cross a 32-byte boundary or end on one. To our advantage, objdump uses different mnemonics for the two possible variants: jmp for the 2-byte instruction, jmpq for the 5-byte one. This allows us to grep for them both with this one-liner:

$ for i in vmlinux-*; do printf "%25s: " "$i"; objdump -wdr "$i" | grep '[13579bdf]\([ef]:.*jmp\>\|[b-f]:.*jmpq\>\)' | wc -l; done

vmlinux-rap: 19872

vmlinux-vanilla: 9770

Still around twice as many as on a vanilla kernel.
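The grep pattern encodes a simple arithmetic condition on the instruction's start address. The sketch below spells that condition out and cross-checks it against the pattern for every offset within a 256-byte window. The objdump-style lines are synthesized for the test (only the address and the mnemonic matter), and the function names are ours.

```python
import re

# Pattern from the shell one-liner, translated to Python syntax (\> becomes \b).
GREP_PATTERN = re.compile(r"[13579bdf]([ef]:.*jmp\b|[b-f]:.*jmpq\b)")


def ends_on_or_crosses_32b(start: int, length: int) -> bool:
    """True if the instruction ends on the last byte of a 32-byte block
    or spills over into the next block."""
    return (start % 32) + length >= 32


def grep_matches(start: int, mnemonic: str) -> bool:
    # Hypothetical objdump-style line; the opcode bytes are placeholders.
    line = f"{start:016x}:\teb 10 \t{mnemonic}    0x0"
    return GREP_PATTERN.search(line) is not None


base = 0xFFFFFFFF81000000
agree = all(
    grep_matches(base + off, name) == ends_on_or_crosses_32b(base + off, size)
    for off in range(256)
    for name, size in (("jmp", 2), ("jmpq", 5))
)
print(agree)  # True
```

The odd second-to-last hex digit in the pattern selects the upper 16 bytes of each 32-byte block, and the last digit selects start offsets from which a 2-byte or 5-byte instruction reaches the block's final byte or beyond.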

Implementing the Workaround

To rule out branches crossing a certain byte boundary or branch target alignment being an issue, we followed the guidance of the JCC whitepaper and implemented support for the -mbranches-within-32B-boundaries gas option in the Linux kernel. For good measure, we complemented it with -falign-functions=32 to ensure all branch targets align to at least a 32-byte address. Turns out, it wasn’t as simple as adding these compiler flags, as the kernel has some low-level assumptions about its binary representation, e.g. when patching itself via its asm alternatives mechanism. After some more surgery, however, we had a patch that would handle the cases we’re interested in.

Repeating the above static analysis of the kernel binary looked promising:

$ for i in vmlinux-*; do printf "%25s: " "$i"; objdump -wdr "$i" | grep '[13579bdf]\([ef]:.*jmp\>\|[b-f]:.*jmpq\>\)' | wc -l; done

vmlinux-rap: 19872

vmlinux-rap_align32b: 259

vmlinux-vanilla: 9770

Much better! The remaining unaligned jumps were within assembler source files, like bootstrap, crypto and syscall entry code. But as we now had far fewer problematic branches, even fewer than the vanilla kernel, it was time for new tests. Unfortunately, those tests showed we were still hitting the bug.

From this experiment, we were able to conclusively rule out the JCC Erratum and began exploring other possibilities.

Creating a Minimal Reproducer

Our tests so far involved building a Linux kernel and running a supposedly harmless workload on the target hardware that would, eventually, trigger the bug and make the kernel crash. While effective, this method limits the possibilities of doing further analysis, as the system to look into dies along the way. So we looked into other methods of triggering the bug by creating a minimal reproducer.

We already had a vague understanding that RAP’s instrumentation of the code must be somehow related, but failed to see how in particular. Trying to create a userland program, mostly consisting of a chain of RAP calls with varying offsets for the various control flow instructions involved, led nowhere – even after days of exercising this code. Moving it to a kernel module didn’t succeed either. We even tried to mirror the same memory state and register conditions, wiping the stack with the same byte pattern the target kernel would use, in case it had any influence on whatever branch logic circuitry exists. But, no dice.

Long story short: we were unable to trigger the bug with our artificial code constructs, extensively exercising what we understood to be the prerequisite. Clearly, it seemed, something else had to have been involved.

Instead of wasting more time on creating a minimal reproducer, we tried a different approach. All we really wanted was to make debugging more feasible by getting rid of bare-metal testing. Fortunately, we were able to trigger the issue when running in a VM, crashing the guest kernel, which accelerated our test cycles significantly. On the other hand, being able to trigger the issue in a VM also meant this might be a security issue if it could affect the host kernel as well. A thought to keep in mind to come back to later.

KVM to the Rescue!

Being able to trigger the bug in a VM allowed much more flexibility in analyzing the issue, like inspecting the VM state after the crash with gdb to look for smoking guns, or monitoring KVM ftrace events around the crash for suspicious VM_EXITs.

We started creating reproducers in the form of slightly differently configured kernels, ending up in different bug flavours. Below is a small selection of analyses of test cases and their visible effects:

Case 1: A Spurious Page Fault

[ 14.015036] PAX: systemd-journal:109, uid/euid: 0/0, attempted to modify kernel code

[ 14.017672] BUG: unable to handle kernel paging request at ffffffff816452cb

[ 14.019962] IP: [<ffffffff8161db2e>] n_tty_write+0x33e/0x7c0

[ 14.021885] PGD ffff888002074ff8 2099067 P4D ffff888002074ff8 2099067 PUD ffff888002099ff0 20a4063 PMD ffff8880020a4058 16000e1

[ 14.025532] Oops: 0003 [#1] SMP

[ 14.026687] Modules linked in:

[ 14.027831] CPU: 2 PID: 109 Comm: systemd-journal Not tainted 4.14.183-grsec+ #45

[ 14.030849] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS 1.12.0-1 04/01/2014

[ 14.034739] task: ffff88801eae1400 task.stack: ffffc90000360000

[ 14.037062] RIP: 0010:[<ffffffff8161db2e>] n_tty_write+0x33e/0x7c0

[ 14.039476] RSP: 0018:ffffc90000363c48 EFLAGS: 00010283

[ 14.041613] RAX: ffffffff81645340 RBX: ffff88801c902000 RCX: 0000000000000000

[ 14.044244] RDX: ffff88801eae1400 RSI: 0000000000000006 RDI: ffffc900033572a0

[ 14.046865] RBP: 0000000000000000 R08: 0000000000000000 R09: 00000000000001a1

[ 14.049555] R10: 0720072007200720 R11: 0720072007200720 R12: ee5e04baa08c358e

[ 14.052205] R13: ffff88801c900801 R14: 0000000000000002 R15: ffffc900033572a0

[ 14.054882] FS: 000062a3c763a940(0000) GS:ffff88801fd00000(0000) knlGS:0000000000000000

[ 14.058177] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 14.060421] CR2: ffffffff816452cb CR3: 0000000002074000 CR4: 00000000000006b0 shadow CR4: 00000000000006b0

[ 14.064939] Stack:

[ 14.066136] 11a1fb45219cafdb ffff88801caca200 ffff88801c9020c0 ffff88801c900800

[ 14.069208] 11a1fb4521ed9ceb ffff88801c902208 0000000000000000 ffff88801eae1400

[ 14.072303] ffffffff8110a100 ffff88801c902210 ffff88801c902210 0000000000000001

[ 14.075406] Call Trace:

[ 14.076712] [<ffffffff8110a100>] ? do_wait_intr_irq+0x120/0x120

[ 14.079074] [<ffffffff8161a965>] tty_write+0x3a5/0x6a0

[ 14.081197] [<ffffffff8161d7f0>] ? process_echoes+0x120/0x120

[ 14.083530] [<ffffffff8127083d>] do_iter_write+0x20d/0x270

[ 14.085758] [<ffffffff812709f5>] vfs_writev+0xe5/0x1a0

[ 14.087867] [<ffffffff81270b2d>] do_writev+0x7d/0x140

[ 14.089993] [<ffffffff81273d05>] sys_writev+0x25/0x60

[ 14.092629] [<ffffffff81273d5d>] rap_sys_writev+0x1d/0x60

[ 14.094862] [<ffffffff81006ffd>] do_syscall_64+0x8d/0x1e0

[ 14.097060] [<ffffffff810012ad>] entry_SYSCALL_64_after_hwframe+0x7b/0x149

[ 14.099714] RIP: 0033:[<000062a3c8435694>] 0x62a3c8435694

[ 14.101892] RSP: 002b:00007260cac76298 EFLAGS: 00000246 ORIG_RAX: 0000000000000014

[ 14.105001] RAX: ffffffffffffffda RBX: 00001c8422e0d530 RCX: 000062a3c8435694

[ 14.107680] RDX: 0000000000000005 RSI: 00007260cac76310 RDI: 0000000000000016

[ 14.110411] RBP: 00007260cac763a0 R08: 00007260cac76210 R09: 0000000000000000

[ 14.113073] R10: 0000000000000000 R11: 0000000000000246 R12: 0000000000000005

[ 14.115767] R13: 0000000000000030 R14: 0000000000000016 R15: 00001c8422e1502a

[ 14.118446] Code: 5d 00 45 85 f6 0f 88 90 02 00 00 49 83 c5 01 48 83 ed 01 0f 85 41 fe ff ff 48 8b 43 18 48 8b 40

48 48 85 c0 74 2c 48 0f ba e8 3f <48> 81 78 f8 6f 48 93 2f 0f 85 4e 04 00 00 48 89 df eb 0f cc cc

[ 14.125914] RIP: [<ffffffff8161db2e>] n_tty_write+0x33e/0x7c0 RSP: ffffc90000363c48

[ 14.129037] CR2: ffffffff816452cb

[ 14.130607] ---[ end trace 08717d19b4d6781f ]---

[ 14.132532] Kernel panic - not syncing: grsec: halting the system due to suspicious kernel crash caused by root

[ 14.136541] Kernel Offset: disabled

[ 14.138094] ---[ end Kernel panic - not syncing: grsec: halting the system due to suspicious kernel crash caused by root

The CPU signaled a page fault (#PF) with error code 3 (i.e. a failed write to a present page). Looking at the page table dump, this looks correct at first sight – the PMD entry (PDE in Intel speak) has the present bit set but lacks the write bit. Trying to write to that memory region therefore is expected to raise a page fault. But looking at the faulting instruction “cmpq $0x2f93486f,-0x8(%rax)”, we can see two oddities:

This is a memory read operation for an, apparently, valid mapping, so it should not fail with a write error and

the effective memory address read by that instruction is 0xffffffff81645338, yet CR2 is 0xffffffff816452cb – off by 0x6d bytes.

This is a spurious page fault with a wrong error code and wrong address in CR2 where there should be no page fault at all.
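The numbers in this analysis can be reproduced with a short sketch. It decodes the low bits of the x86 page fault error code and recomputes the effective address of the faulting cmpq from the register dump. The helper name and the bit descriptions are our wording.

```python
# Low bits of the x86 page fault error code
PF_BITS = {
    0: "present (protection violation)",
    1: "write access",
    2: "user mode",
    3: "reserved bit set",
    4: "instruction fetch",
}


def decode_pf_error(code: int) -> list:
    """Return the descriptions of all bits set in a #PF error code."""
    return [name for bit, name in PF_BITS.items() if code & (1 << bit)]


rax = 0xFFFFFFFF81645340
cr2 = 0xFFFFFFFF816452CB
effective = rax - 0x8          # memory operand of cmpq $0x2f93486f,-0x8(%rax)

print(decode_pf_error(0x3))    # ['present (protection violation)', 'write access']
print(hex(effective))          # 0xffffffff81645338
print(hex(effective - cr2))    # 0x6d
```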

Case 2: Short Jumps

[ 1569.045127] int3: 0000 [#1] SMP

[ 1569.047470] Modules linked in:

[ 1569.047479] CPU: 1 PID: 2037725 Comm: date Not tainted 4.14.183-grsec+ #52

[ 1569.047480] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS 1.12.0-1 04/01/2014

[ 1569.047483] task: ffff88801a60e400 task.stack: ffff88801ac50000

[ 1569.047490] RIP: 0010:[<ffffffff81226131>] do_munmap+0x361/0x630

[ 1569.047491] RSP: 0018:ffff88801ac53e30 EFLAGS: 00000246

[ 1569.047493] RAX: ffff88801bc4eee8 RBX: ffff88801bfa4800 RCX: 000000001ac53e90

[ 1569.047494] RDX: ffff88801bc4eed8 RSI: ffff88801bc4ebb8 RDI: ffff88801bfa4800

[ 1569.047495] RBP: ffff88801ac53e90 R08: ffff88801bc4ebb8 R09: 0000000000000000

[ 1569.047496] R10: 0000000000000000 R11: ffff88801bc4ebd8 R12: c7cec31b4fad244a

[ 1569.047497] R13: 0000708f9eff3000 R14: 0000000000000000 R15: ffff88801bc4ebb8

[ 1569.047499] FS: 0000708f9eff2580(0000) GS:ffff88801fc80000(0000) knlGS:0000000000000000

[ 1569.047500] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 1569.047506] CR2: 0000708f9eff01c8 CR3: 0000000002070000 CR4: 00000000000006b0 shadow CR4: 00000000000006b0

[ 1569.047506] Stack:

[ 1569.047510] 0000000000000000 ffff88801bc4eee8 38313ce4ce8f4b5f ffff88801bfa4808

[ 1569.047519] 0000000000000000 ffff88801bc4eed8 ffff88801bc4ebb8 0000708f9eff9000

[ 1569.047526] 38313ce4ce8f4bff ffff88801ac53eb0 ffff88801bfa4878 ffff88801bfa4800

[ 1569.047533] Call Trace:

[ 1569.047541] [<ffffffff81226f15>] vm_munmap+0x85/0x100

[ 1569.047551] [<ffffffff81226fb5>] sys_munmap+0x25/0x60

[ 1569.113623] [<ffffffff81227015>] rap_sys_munmap+0x25/0x60

[ 1569.113628] [<ffffffff81007076>] do_syscall_64+0x96/0x1d0

[ 1569.113631] [<ffffffff81001215>] entry_SYSCALL_64_after_hwframe+0x63/0xfb

[ 1569.113635] RIP: 0033:[<0000708f9f018c87>] 0x708f9f018c87

[ 1569.113637] RSP: 002b:000078df3e943678 EFLAGS: 00000202 ORIG_RAX: 000000000000000b

[ 1569.113639] RAX: ffffffffffffffda RBX: 00000246eef6bf51 RCX: 0000708f9f018c87

[ 1569.113641] RDX: 0000024600000000 RSI: 00000000000053ae RDI: 0000708f9eff3000

[ 1569.113642] RBP: 000078df3e943870 R08: 0000000000000001 R09: 0000708f9ee396d0

[ 1569.113643] R10: 0000708f9f026f68 R11: 0000000000000202 R12: 0000000000000000

[ 1569.113644] R13: 0000708f9f028190 R14: 0000708f9eff2580 R15: 0000708f9f028190

[ 1569.113646] Code: 48 89 53 48 48 8b 54 24 28 4c 89 e9 4c 89 fe 48 89 df 49 c7 40 10 00 00 00 00 4c 8b 44 24 38 48

83 43 10 01 eb 14 cc cc cc cc cc <cc> cc 48 b8 c2 1b 3f 84 ff ff ff ff cc cc cc e8 9b be ff ff 48

[ 1569.146940] RIP: [<ffffffff81226131>] do_munmap+0x361/0x630 RSP: ffff88801ac53e30

A breakpoint trap (#BP) was hit, which is in sync with the in-memory opcode bytes. However, the trapping instruction can never be reached under normal circumstances. It always gets unconditionally jumped over, as it’s part of the RAP ret hash sequence. In fact, all instructions in the range [0xffffffff8122612c,0xffffffff8122613f] are never supposed to be executed – ever!

Below is the full code dump disassembly, marking the trapping instruction and the supposedly wrongly-executed one:

ffffffff81226106: 48 89 53 48 mov %rdx,0x48(%rbx)

ffffffff8122610a: 48 8b 54 24 28 mov 0x28(%rsp),%rdx

ffffffff8122610f: 4c 89 e9 mov %r13,%rcx

ffffffff81226112: 4c 89 fe mov %r15,%rsi

ffffffff81226115: 48 89 df mov %rbx,%rdi

ffffffff81226118: 49 c7 40 10 00 00 00 movq $0x0,0x10(%r8)

ffffffff8122611f: 00

ffffffff81226120: 4c 8b 44 24 38 mov 0x38(%rsp),%r8

ffffffff81226125: 48 83 43 10 01 addq $0x1,0x10(%rbx)

ffffffff8122612a: eb 14 jmp 0xffffffff81226140 ; previous instruction that "jumps short"

ffffffff8122612c: cc int3

ffffffff8122612d: cc int3

ffffffff8122612e: cc int3

ffffffff8122612f: cc int3

ffffffff81226130: cc int3

ffffffff81226131: cc int3 ; trapping instruction

ffffffff81226132: cc int3

ffffffff81226133: 48 b8 c2 1b 3f 84 ff movabs $0xffffffff843f1bc2,%rax

ffffffff8122613a: ff ff ff

ffffffff8122613d: cc int3

ffffffff8122613e: cc int3

ffffffff8122613f: cc int3

ffffffff81226140: e8 9b be ff ff callq 0xffffffff81221fe0 ; expected jump target

The “jmp 0xffffffff81226140” at address 0xffffffff8122612a jumped 15 bytes short! It should have targeted the call instruction at 0xffffffff81226140 but hit the int3 at 0xffffffff81226131.
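The expected target follows directly from the encoding of the short jump: the signed 8-bit displacement is added to the address of the next instruction. A minimal sketch of that calculation, using the bytes and addresses from the listing (the function name is ours):

```python
def jmp_rel8_target(addr: int, insn: bytes) -> int:
    """Compute the target of a 2-byte short jump (opcode 0xEB, rel8)."""
    if len(insn) != 2 or insn[0] != 0xEB:
        raise ValueError("not a 2-byte jmp rel8")
    rel = int.from_bytes(insn[1:2], "little", signed=True)
    return addr + len(insn) + rel


target = jmp_rel8_target(0xFFFFFFFF8122612A, bytes([0xEB, 0x14]))
trap_rip = 0xFFFFFFFF81226131

print(hex(target))            # 0xffffffff81226140
print(target - trap_rip)      # 15
```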

Case 3: Wild Deref

[ 1631.263362] BUG: unable to handle kernel paging request at 000000000305d000

[ 1631.286959] RIP: 0010:[<ffffffff81311b30>] load_elf_binary+0x1f50/0x2b10

[ 1631.286961] RSP: 0018:ffff88801a1c7cd0 EFLAGS: 00010206

[ 1631.286963] RAX: 000000000305d000 RBX: ffff88801eb89880 RCX: 0000000000000000

[ 1631.286964] RDX: 0000000000000000 RSI: 0000191e59b06000 RDI: 0000000000000000

[ 1631.286966] RBP: ffff88801a1c7de0 R08: 0000000000000012 R09: ffff88801abac600

[ 1631.286967] R10: ffffffff81311acd R11: ffff88801eb89880 R12: 081c8b407f092d9e

[ 1631.286968] R13: ffff88801ea5e900 R14: 0000000000000003 R15: 000000000305cc20

[ 1631.286970] FS: 0000697476f31580(0000) GS:ffff88801fd80000(0000) knlGS:0000000000000000

[ 1631.286971] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 1631.286976] CR2: 000000000305d000 CR3: 000000001c847000 CR4: 00000000000006b0 shadow CR4: 00000000000006b0

[ 1631.286977] Stack:

[ 1631.286979] 00007fffffffe000 ffff88801c9d8478 0000201700000002 0000000300000000

[ 1631.286983] 0000191e59aea000 ffff88801ea5e900 0000191e59b04250 00007fff00000000

[ 1631.286987] ffff88801bff3fc0 0000191e59aea000 0000000000000000 0000191e59afc789

[ 1631.286990] Call Trace:

[ 1631.287001] [<ffffffff812817ad>] ? copy_strings+0x35d/0x700

[ 1631.351584] [<ffffffff8127fcd5>] search_binary_handler+0x105/0x2c0

[ 1631.351589] [<ffffffff8128289d>] do_execveat_common+0x9fd/0xdd0

[ 1631.351594] [<ffffffff81290000>] ? path_openat+0x870/0x20f0

[ 1631.351596] [<ffffffff81282cad>] do_execve+0x3d/0x80

[ 1631.351599] [<ffffffff812831fd>] rap_sys_execve+0x4d/0x90

[ 1631.351602] [<ffffffff81007076>] do_syscall_64+0x96/0x1d0

[ 1631.351605] [<ffffffff8100109d>] entry_SYSCALL_64_after_hwframe+0x69/0xfe

[ 1631.351610] RIP: 0033:[<0000697476e37e87>] 0x697476e37e87

[ 1631.351611] RSP: 002b:0000700afc708338 EFLAGS: 00000246 ORIG_RAX: 000000000000003b

[ 1631.351614] RAX: ffffffffffffffda RBX: 00000000ffffffff RCX: 0000697476e37e87

[ 1631.351615] RDX: 0000188007cda4a0 RSI: 0000188007cda490 RDI: 0000188007cda510

[ 1631.351616] RBP: 0000188007cda490 R08: 0000188007cda510 R09: fefefeff64736063

[ 1631.351617] R10: 0000697476f31850 R11: 0000000000000246 R12: 00001880054c647e

[ 1631.351618] R13: 0000188007cda4a0 R14: 0000188007cda4a0 R15: 0000188007cda510

[ 1631.351629] Code: cc cc cc e8 13 de 8e 00 48 8b 74 24 70 31 c9 31 ff 48 8b 84 24 90 00 00 00 41 b8 12 00 00 00 48

05 ff 1f 00 00 48 25 00 f0 ff ff <48> 89 c2 48 89 84 24 90 00 00 00 eb 13 cc cc cc cc cc cc 48 b8

[ 1631.401118] RIP: [<ffffffff81311b30>] load_elf_binary+0x1f50/0x2b10 RSP: ffff88801a1c7cd0

[ 1631.401126] CR2: 000000000305d000

The above debug splat is the result of a page fault error, apparently dereferencing RAX, as its value can be found in CR2. However, the faulting instruction is “mov %rax,%rdx” – a register-only operation that isn’t supposed to generate a page fault or touch memory in any way.

Case 4a: Jumping Wild

The log for test case 4 contained multiple errors. Here’s the first one:

[ 1158.694888] BUG: unable to handle kernel paging request at ffffffff81218c6b

[ 1158.699956] IP: [<ffffffff81218c40>] vm_munmap+0xe0/0x100

[ 1158.699966] PGD ffff88801e9dfff8 20b8067 P4D ffff88801e9dfff8 20b8067 PUD ffff8880020b8ff0 20ba063 PMD ffff8880020ba048 12001e1

[ 1158.706860] Oops: 0003 [#1] SMP

[ 1158.706864] Modules linked in:

[ 1158.706870] CPU: 1 PID: 2326390 Comm: date Not tainted 4.14.183-grsec+ #58

[ 1158.706871] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS 1.12.0-1 04/01/2014

[ 1158.706874] task: ffff88801db92800 task.stack: ffff88801aee4000

[ 1158.706880] RIP: 0010:[<ffffffff81218c40>] vm_munmap+0xe0/0x100

[ 1158.706891] RSP: 0018:ffff88801aee7f28 EFLAGS: 00010286

[ 1158.706893] RAX: 000000001aee7f10 RBX: ffffffff81218ce5 RCX: 0000000000000078

[ 1158.706894] RDX: ffffffff81218ce5 RSI: ffffffff81218c05 RDI: ffff88801cdc0478

[ 1158.706895] RBP: 0000000000000000 R08: ffff88801a60d000 R09: 0000000180140013

[ 1158.706896] R10: ffff88801aee7df8 R11: ffff88801ffdc000 R12: 4d6233e2d8938740

[ 1158.706897] R13: ffff88801aee7f48 R14: ffff88801aee7f38 R15: ffffffff81007076

[ 1158.706899] FS: 00006533bdae3580(0000) GS:ffff88801fc80000(0000) knlGS:0000000000000000

[ 1158.706900] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 1158.706905] CR2: ffffffff81218c6b CR3: 000000001e9df000 CR4: 00000000000006b0 shadow CR4: 00000000000006b0

[ 1158.706906] Stack:

[ 1158.706908] 8000000000000000 0000000000000000 0000000000000000 ffffffff8100109d

[ 1158.706911] 00006533bdb19190 00006533bdae3580 00006533bdb19190 0000000000000000

[ 1158.706914] 0000747e187af1b0 000001aea5e86242 0000000000000206 00006533bdb17f68

[ 1158.706917] Call Trace:

[ 1158.706928] [<ffffffff8100109d>] ? entry_SYSCALL_64_after_hwframe+0x69/0xfe

[ 1158.706930] Code: c2 66 01 c6 75 20 48 83 c4 20 44 89 e8 5b 41 5d 41 5e 41 5f 5d 48 0f ba 2c 24 3f c3 0f 0b 41 bd

fc ff ff ff eb ca 0f b9 10 0f b9 <10> 66 66 2e 0f 1f 84 00 00 00 00 00 0f 1f 40 00 cc cc cc cc cc

[ 1158.706967] RIP: [<ffffffff81218c40>] vm_munmap+0xe0/0x100 RSP: ffff88801aee7f28

[ 1158.706968] CR2: ffffffff81218c6b

The faulting instruction decodes to “adc %ah,0x66(%rsi)” which matches the register state (RSI and CR2 in particular, RSI + 0x66 == 0xffffffff81218c6b == CR2). However, that instruction isn’t part of the intended program. The original instruction stream is as follows:

ffffffff81218c19: 75 20 jne 0xffffffff81218c3b

ffffffff81218c1b: 48 83 c4 20 add $0x20,%rsp

ffffffff81218c1f: 44 89 e8 mov %r13d,%eax

ffffffff81218c22: 5b pop %rbx

ffffffff81218c23: 41 5d pop %r13

ffffffff81218c25: 41 5e pop %r14

ffffffff81218c27: 41 5f pop %r15

ffffffff81218c29: 5d pop %rbp

ffffffff81218c2a: 48 0f ba 2c 24 3f btsq $0x3f,(%rsp)

ffffffff81218c30: c3 retq

ffffffff81218c31: 0f 0b ud2

ffffffff81218c33: 41 bd fc ff ff ff mov $0xfffffffc,%r13d

ffffffff81218c39: eb ca jmp 0xffffffff81218c05

ffffffff81218c3b: 0f b9 10 ud1 (%rax),%edx

ffffffff81218c3e: 0f b9 10 ud1 (%rax),%edx ; fault in the middle of this instruction

ffffffff81218c41: 66 66 2e 0f 1f 84 00 data16 nopw %cs:0x0(%rax,%rax,1)

ffffffff81218c48: 00 00 00 00

ffffffff81218c4c: 0f 1f 40 00 nopl 0x0(%rax)

ffffffff81218c50: cc int3

ffffffff81218c51: cc int3

ffffffff81218c52: cc int3

ffffffff81218c53: cc int3

ffffffff81218c54: cc int3

The ud1 instructions above are RAP trap instructions, which are jumped to only if a control flow violation was detected, i.e. on a RAP hash mismatch. Still, we should never observe execution in the middle of one of these instructions. Therefore, the page fault is just a follow-up error of an incorrect control flow redirection.
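The decoding of the three bytes at the faulting RIP (10 66 66) can be reproduced by hand. The following is a minimal Python sketch, not a general disassembler: it only handles opcode 0x10 (ADC r/m8, r8) with a ModRM byte that selects a base register plus an 8-bit displacement. The function and table names are made up for this illustration.

```python
# Sketch: decode "10 66 66" manually. Only one encoding is handled:
# opcode 0x10 (ADC r/m8, r8) with ModRM mod=01 (base register + disp8).

REG8 = ["al", "cl", "dl", "bl", "ah", "ch", "dh", "bh"]  # without a REX prefix
BASE64 = ["rax", "rcx", "rdx", "rbx", None, "rbp", "rsi", "rdi"]  # None: SIB form


def decode_adc_rm8_r8(code: bytes) -> str:
    opcode, modrm, disp8 = code[0], code[1], code[2]
    if opcode != 0x10:
        raise ValueError("not ADC r/m8, r8")
    mod, reg, rm = modrm >> 6, (modrm >> 3) & 7, modrm & 7
    if mod != 0b01 or BASE64[rm] is None:
        raise ValueError("addressing form not handled by this sketch")
    disp = disp8 - 0x100 if disp8 & 0x80 else disp8  # sign-extend disp8
    # AT&T syntax: source register first, then disp(base)
    return f"adc %{REG8[reg]},{disp:#x}(%{BASE64[rm]})"


decoded = decode_adc_rm8_r8(bytes.fromhex("106666"))
print(decoded)  # adc %ah,0x66(%rsi)

# RSI value implied by the relation RSI + 0x66 == CR2 quoted above
cr2 = 0xffffffff81218c6b
implied_rsi = cr2 - 0x66
print(hex(implied_rsi))  # 0xffffffff81218c05
```

The ModRM byte 0x66 splits into mod=01, reg=100 (AH) and rm=110 (RSI), which is why the stray bytes read as a memory write relative to RSI.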

Case 4b: Jumping Shorter

The second error is as follows:

[ 1190.668570] invalid opcode: 0000 [#2] SMP

[ 1190.670628] Modules linked in:

[ 1190.672289] CPU: 2 PID: 266 Comm: repro.sh Tainted: G D 4.14.183-grsec+ #58

[ 1190.676067] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS 1.12.0-1 04/01/2014

[ 1190.679874] task: ffff88801ac62800 task.stack: ffff88801ad44000

[ 1190.683281] RIP: 0010:[<ffffffff8109a941>] copy_process.part.3+0x1b01/0x2250

[ 1190.687283] RSP: 0018:ffff88801ad47db0 EFLAGS: 00010247

[ 1190.689900] RAX: ffff88801afa14b0 RBX: 0000000001200011 RCX: ffff88801afa14d0

[ 1190.693275] RDX: ffff88801afa14d8 RSI: ffff88801afa1bb8 RDI: ffff88801bf03c01

[ 1190.696672] RBP: ffff88801ad47e78 R08: ffff88801afa1bb8 R09: ffff88801e59cc38

[ 1190.699743] R10: 000000000000001d R11: ffffffff810f554d R12: da40c9769facba00

[ 1190.702949] R13: ffff88801bf03c00 R14: ffff88801a590a00 R15: ffff88801a590eb8

[ 1190.706319] FS: 00007b0744c18580(0000) GS:ffff88801fd00000(0000) knlGS:0000000000000000

[ 1190.710374] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 1190.713611] CR2: 00007b0744c18500 CR3: 000000001becf000 CR4: 00000000000006b0 shadow CR4: 00000000000006b0

[ 1190.718242] Stack:

[ 1190.719605] ffff88801afa1bb8 ffff88801afa1bb8 ffff88801ac62800 ffff88801afa14d0

[ 1190.723377] ffff88801afa14d8 ffff88801afa1bc8 ffff88801bf03c78 ffff88801bf01878

[ 1190.727204] ffff88801e59cc48 ffff88801afa14b0 ffff88801bf01800 0000000000000000

[ 1190.730939] Call Trace:

[ 1190.732508] [<ffffffff8109b3b5>] _do_fork+0xf5/0x520

[ 1190.735010] [<ffffffff8109babd>] rap_sys_clone+0x2d/0x70

[ 1190.737696] [<ffffffff81007076>] do_syscall_64+0x96/0x1d0

[ 1190.740635] [<ffffffff8100109d>] entry_SYSCALL_64_after_hwframe+0x69/0xfe

[ 1190.745029] RIP: 0033:[<00007b0744b1ec40>] 0x7b0744b1ec40

[ 1190.747986] RSP: 002b:00007be6e658d110 EFLAGS: 00000246 ORIG_RAX: 0000000000000038

[ 1190.752235] RAX: ffffffffffffffda RBX: 0000000000000000 RCX: 00007b0744b1ec40

[ 1190.755931] RDX: 0000000000000000 RSI: 0000000000000000 RDI: 0000000001200011

[ 1190.759548] RBP: 0000000000000000 R08: 0000000000000000 R09: 00007b0744c18580

[ 1190.763200] R10: 00007b0744c18850 R11: 0000000000000246 R12: 0000000000000000

[ 1190.766814] R13: 000010e1c5bc6c40 R14: 000010e1c5dd1e70 R15: 00007be6e658d1d0

[ 1190.770507] Code: c6 4c 89 ef 48 8b 54 24 20 4c 89 00 49 8d 40 10 48 89 44 24 28 48 8b 44 24 48 4c 89 44 24 48 49

89 40 18 eb 0d 48 b8 3b 32 95 aa <ff> ff ff ff cc cc cc e8 d3 9c 17 00 4c 8b 44 24 48 41 83 45 70

[ 1190.780577] RIP: [<ffffffff8109a941>] copy_process.part.3+0x1b01/0x2250 RSP: ffff88801ad47db0

This is an invalid opcode exception (#UD) triggered by an actual invalid instruction. However, the instruction pointer again points into the middle of an instruction that was never meant to run at that offset. Once again, the instruction is part of the unexecuted RAP ret hash, as we already observed in case 2.

This is the original instruction stream:

ffffffff8109a917: 4c 89 ef mov %r13,%rdi

ffffffff8109a91a: 48 8b 54 24 20 mov 0x20(%rsp),%rdx

ffffffff8109a91f: 4c 89 00 mov %r8,(%rax)

ffffffff8109a922: 49 8d 40 10 lea 0x10(%r8),%rax

ffffffff8109a926: 48 89 44 24 28 mov %rax,0x28(%rsp)

ffffffff8109a92b: 48 8b 44 24 48 mov 0x48(%rsp),%rax

ffffffff8109a930: 4c 89 44 24 48 mov %r8,0x48(%rsp)

ffffffff8109a935: 49 89 40 18 mov %rax,0x18(%r8)

ffffffff8109a939: eb 0d jmp 0xffffffff8109a948 ; jumping over the faulting instruction

ffffffff8109a93b: 48 b8 3b 32 95 aa ff movabs $0xffffffffaa95323b,%rax ; fault at the last instruction byte

ffffffff8109a942: ff ff ff

ffffffff8109a945: cc int3

ffffffff8109a946: cc int3

ffffffff8109a947: cc int3

ffffffff8109a948: e8 d3 9c 17 00 callq 0xffffffff81214620

ffffffff8109a94d: 4c 8b 44 24 48 mov 0x48(%rsp),%r8

This makes the error look similar to case 2 – again a “short jump”. The jmp instruction at address 0xffffffff8109a939 is jumping 7 bytes short and should have ended on the CALL instruction at address 0xffffffff8109a948.
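The "7 bytes short" figure follows from how a short jump encodes its target: the 8-bit displacement is relative to the address of the next instruction. A small Python sketch of that arithmetic, using the addresses from the listing above (the helper name is hypothetical):

```python
def short_jmp_target(insn_addr: int, rel8: int) -> int:
    """Target of a 2-byte short jump (opcode byte + rel8) in 64-bit mode."""
    disp = rel8 - 0x100 if rel8 & 0x80 else rel8  # sign-extend the displacement
    return (insn_addr + 2 + disp) & 0xFFFFFFFFFFFFFFFF


# "eb 0d" at 0xffffffff8109a939
intended = short_jmp_target(0xffffffff8109a939, 0x0d)
faulting_rip = 0xffffffff8109a941

print(hex(intended))            # 0xffffffff8109a948
print(intended - faulting_rip)  # 7
```

The same helper reproduces the backward jump "eb ca" at 0xffffffff81218c39 from the earlier listing, which resolves to 0xffffffff81218c05.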

Case 5: Jumping Short, take 3

Our last one is this:

[71010.980469] int3: 0000 [#1] SMP

[71010.982047] Modules linked in:

[71010.982057] CPU: 1 PID: 1313755 Comm: date Not tainted 4.14.183-grsec+ #82

[71010.982059] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS 1.12.0-1 04/01/2014

[71010.982061] task: ffff888015c31400 task.stack: ffff88801a2c4000

[71010.982070] RIP: 0010:[<ffffffff81202a01>] unlink_file_vma+0xc1/0xd0

[71010.982071] RSP: 0018:ffff88801a2c7d68 EFLAGS: 00000292

[71010.982073] RAX: ffffffff811f6b7d RBX: 4d5bbf8c5888a5fd RCX: 0000000000000000

[71010.982074] RDX: ffffffff00000001 RSI: ffffffff812029cd RDI: ffff88801e4c4bb0

[71010.982075] RBP: 00007f9d8e46e000 R08: ffff88801d8bb9b8 R09: 0000000000000006

[71010.982076] R10: ffff88801a2c7cd0 R11: ffff88801ffdc000 R12: b2a44073d9a88478

[71010.982077] R13: 00007f9d8e46e000 R14: ffff88801a2c7da0 R15: 00007f9d8e468000

[71010.982079] FS: 00007f9d8e467580(0000) GS:ffff88801fc80000(0000) knlGS:0000000000000000

[71010.982080] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[71010.982086] CR2: 00007f9d8e4651c8 CR3: 000000001a895000 CR4: 00000000000006b0 shadow CR4: 00000000000006b0

[71010.982087] Stack:

[71010.982089] ffff88801a165000 ffff88801db3f000 ffff88801a2c7e40 ffffffff81202185

[71010.982092] 4d5bbf8c5888cb9d ffffffff812029cd ffff88801a165708 ffff88801db3f000

[71010.982095] 00007f9d8e468000 00007f9d8e46e000 00007f9d8e497000 ffff88801a2c7dc8

[71010.982097] Call Trace:

[71010.982106] [<ffffffff81202185>] ? unmap_region+0x135/0x1c0

[71010.982109] [<ffffffff812029cd>] ? unlink_file_vma+0x8d/0xd0

[71010.982112] [<ffffffff81204fe5>] ? do_munmap+0x385/0x630

[71010.982115] [<ffffffff81205315>] ? vm_munmap+0x85/0x100

[71010.982118] [<ffffffff81205415>] ? rap_sys_munmap+0x25/0x60

[71010.982122] [<ffffffff81007076>] ? do_syscall_64+0x96/0x1d0

[71010.982125] [<ffffffff8100109d>] ? entry_SYSCALL_64_after_hwframe+0x69/0xfe

[71010.982128] Code: f0 75 1f 48 81 78 f0 8f 61 90 89 75 18 48 83 c4 08 5b 41 5d 41 5e 41 5f 5d 48 0f ba 2c 24 3f c3

0f 0b 0f b9 00 0f b9 00 66 90 cc <cc> cc cc cc cc 48 b8 c5 cd 6a 55 00 00 00 00 55 49 89 f9 48 89

[71011.060543] RIP: [<ffffffff81202a01>] unlink_file_vma+0xc1/0xd0 RSP: ffff88801a2c7d68

It’s a breakpoint trap (#BP), again in sync with the in-memory instructions. However, once again, the CPU attempted to execute a RAP hash sequence. This time it’s a RAP call hash, an instruction sequence that isn’t meant to be executed either and which no branch instruction would intentionally target.

Here is the disassembly of the instruction stream:

ffffffff812029d9: 48 81 78 f0 8f 61 90 cmpq $0xffffffff8990618f,-0x10(%rax) ; RAP return hash check

ffffffff812029e0: 89

ffffffff812029e1: 75 18 jne 0xffffffff812029fb

ffffffff812029e3: 48 83 c4 08 add $0x8,%rsp

ffffffff812029e7: 5b pop %rbx

ffffffff812029e8: 41 5d pop %r13

ffffffff812029ea: 41 5e pop %r14

ffffffff812029ec: 41 5f pop %r15

ffffffff812029ee: 5d pop %rbp

ffffffff812029ef: 48 0f ba 2c 24 3f btsq $0x3f,(%rsp)

ffffffff812029f5: c3 retq ; end of unlink_file_vma()

ffffffff812029f6: 0f 0b ud2 ; speculation trap

ffffffff812029f8: 0f b9 00 ud1 (%rax),%eax ; RAP hash check trap #1

ffffffff812029fb: 0f b9 00 ud1 (%rax),%eax ; RAP hash check trap #2

ffffffff812029fe: 66 90 xchg %ax,%ax ; inter-function alignment padding

ffffffff81202a00: cc int3 ; start of RAP call hash sequence

ffffffff81202a01: cc int3 ; trapping instruction

ffffffff81202a02: cc int3

ffffffff81202a03: cc int3

ffffffff81202a04: cc int3

ffffffff81202a05: cc int3

ffffffff81202a06: 48 b8 c5 cd 6a 55 00 movabs $0x556acdc5,%rax

ffffffff81202a0d: 00 00 00

ffffffff81202a10: 55 push %rbp ; start of next function

ffffffff81202a11: 49 89 f9 mov %rdi,%r9

It’s completely unclear how the control flow could have ended up here. As already mentioned, it’s the void between two function symbols.
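Lining up an Oops "Code:" dump with a disassembly, as done for the cases above, comes down to finding the byte marked with angle brackets (the one at RIP) and counting backwards. A minimal Python sketch, using the Code line from case 5 joined onto one line; the function name is an assumption made for this example:

```python
def locate_code_bytes(code_line: str, rip: int):
    """Return (address of first dumped byte, index of the byte at RIP, raw bytes)."""
    tokens = code_line.split("Code:", 1)[1].split()
    marked = next(i for i, tok in enumerate(tokens) if tok.startswith("<"))
    data = bytes(int(tok.strip("<>"), 16) for tok in tokens)
    return rip - marked, marked, data


CODE_LINE = (
    "Code: f0 75 1f 48 81 78 f0 8f 61 90 89 75 18 48 83 c4 08 5b 41 5d 41 5e "
    "41 5f 5d 48 0f ba 2c 24 3f c3 0f 0b 0f b9 00 0f b9 00 66 90 cc <cc> cc "
    "cc cc cc 48 b8 c5 cd 6a 55 00 00 00 00 55 49 89 f9 48 89"
)

start, marked, data = locate_code_bytes(CODE_LINE, 0xffffffff81202a01)
print(hex(start), marked, hex(data[marked]))
# 0xffffffff812029d6 43 0xcc
```

The dump therefore starts three bytes before the cmpq at 0xffffffff812029d9, and the marked byte is the second int3 of the RAP call hash padding.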

Facts Gathered so Far

All crashes have in common that the CPU’s register state cannot explain the observed behaviour. Either the instruction pointer is out-of-sync with the in-memory instruction stream (cases 2 and 4) or the generated exception cannot be raised by the faulting instruction (e.g. case 3 where a register-only instruction generates a #PF with error code 3).
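To see why a #PF with error code 3 cannot come from a register-only instruction, it helps to decode the error code bits as architecturally defined for x86 page faults. A short Python sketch (the helper name is hypothetical; only the five low bits are decoded):

```python
def decode_pf_error_code(code: int) -> list[str]:
    """Decode the low bits of an x86 page-fault error code."""
    flags = [
        "protection violation" if code & 0x01 else "page not present",
        "write access" if code & 0x02 else "read access",
        "user mode" if code & 0x04 else "supervisor mode",
    ]
    if code & 0x08:
        flags.append("reserved bit set in paging structure")
    if code & 0x10:
        flags.append("instruction fetch")
    return flags


print(decode_pf_error_code(3))
# ['protection violation', 'write access', 'supervisor mode']
```

Error code 3 thus describes a kernel-mode write to a present page, which requires a memory operand that a register-only instruction does not have.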

None of the test cases fail on the first execution attempt. However, they are reproducible. Sometimes they trigger within mere seconds, sometimes within minutes; in rare cases it takes a few hours.

Strangely enough, this VM only triggers the crashes when running on a very specific Atom CPU based system: an Intel Atom x7-E3950, stepping 10. Stepping 9 of this very CPU is running just fine.

Running the same setup (same VM, same kernel, same QEMU command line, including -cpu kvm64 to rule out interference from host-CPU-specific feature differences) on other Intel-based systems (one i9-9900, one Cascade Lake ESXi-based system) failed to reproduce the issue – they ran flawlessly for days.

We also know that it can’t be a stale TLB entry or the like, as all crashes happened in core kernel code that uses a rather static mapping that won’t be modified at runtime.

While we tested a large number of kernel configurations and combinations of instrumentation, we weren't able to pin down which instrumentation exacerbates the issue. That said, we only managed to reproduce the issue with all three RAP modes enabled in combination: the deterministic forward and backward-edge checks and the probabilistic XOR instrumentation. From the above analysis, we also know that aligning branch targets did not resolve the issue, an aspect that will be important later.

A Microcode Regression?

While continuing our search for an explanation for the observed behaviour, we started looking through the errata list once again. As most people familiar with these errata lists are aware, they are disappointingly, and seemingly increasingly, vague and sparse on the conditions necessary to trigger a certain bug. So this was a dead end, as none of the errata stood out or would apply to stepping 10 only.

Looking further, we ran across an article from Red Hat mentioning that certain microcode updates could lead to kernel crashes. Although it mentioned Xeon v3 CPUs only, the fault behaviour matched exactly what we observed: a register-only instruction triggering a page fault. Red Hat suggested using the latest microcode version available. We were already using the latest one, so to investigate whether it had introduced a regression, we tried older microcode versions.

Microcode Downgrading

To be able to test old microcode, we needed to modify the microcode updater in the Linux kernel to accept lower revisions than the currently loaded one. The Intel SDM isn’t specific about whether loading a microcode update with a lower revision number than the one currently loaded simply shouldn’t be done or isn’t supported at all, i.e. whether the CPU would reject such an “update”. The sketched procedure suggests skipping microcode updates with a lower version, but doesn’t mention whether attempting to load such an update would actually fail.

It turns out that Intel CPUs do support microcode downgrading.

If you want to play with this yourself, this patch allows loading a microcode update file with a lower revision than loaded by the BIOS during early boot. The microcode “update” needs to be provided as an initrd cpio archive as sketched here. As a safety guard, it requires ucdown to be present on the kernel command line, which makes it easy to recover from failing downgrade attempts.

Tests with microcode revision 0x10 – the earliest we could find for this stepping – also triggered the bug, making us conclude it wasn’t a regression introduced in a recent microcode update.

Microcode Upgrading

After contacting the customer with our results gathered so far, we were able to test an – at the time – unpublished update, revision 0x1e. It later became part of the microcode update pack that fixed the RAPL information leak, known as INTEL-SA-00389. Apollo Lake CPUs aren’t listed as affected, yet Intel must have had a reason to create new microcode updates for this platform, perhaps fixing yet another undisclosed issue. Regardless, our testing confirmed that this microcode update didn’t fix the bug.

Half a year later, another microcode update was released to fix the information leak known as INTEL-SA-00465. We tested that one as well, not expecting any improvement, and confirmed the CPU misbehavior still triggered as before.

Onboarding Intel

By now it was clear that this issue wasn’t a software bug, as we ruled out all alternatives. It was about time to inform the vendor, and that’s what we did by the end of September 2020. We got a fast response, but “corporate discrepancies” took their toll and after some back and forth our initial attempts to set up a work environment unfortunately failed. That took the wind out of our sails and the matter was slowly forgotten in favour of more urgent tasks.

Half a year later, the issue got some traction again and with the support of our customer’s existing communication channels, we were able to provide Intel our VM test setup for reproducing the issue. To Intel's credit, within a month they were able to confirm the issue and provide a debug microcode patch for testing as a potential fix. After hours of intensive testing on our side, we were able to confirm that it, indeed, fixes the issue!

However, a debug microcode update isn’t all that valuable, as it needs support by the firmware to enable the CPU’s debug mode, either by having a dedicated BIOS setup option (which our setup lacked) or a binary modification of the UEFI firmware like we attempted via sdbg_enable_patch.txt. This obviously isn't a production-grade solution: a non-debug microcode update was needed, which Intel provided a month later.

Case closed? Well, not really. While we are happy that Intel found a way to fix the bug on their side (and thankful to the people at Intel who helped in creating the fix), there are many systems out there running the old microcode which would still be subject to spurious crashes. If a deeper analysis showed it was possible, we were still strongly interested in providing a workaround in software by changing the instrumentation performed in grsecurity to please the CPU and avoid triggering the bug. Unfortunately, Intel was silent on the matter, so we had no choice but to take a rather extreme approach.

Reversing the Fix

With the help of the impressive preliminary work of Positive Technologies, we were able to determine that the only difference in the debug microcode update was this additional CRBUS (Control Register Bus) register write:

The fix is, apparently, to toggle a bit in an internal register. As there’s no public documentation about these, our best guess is that it is related to cache control, disabling some optimization, that otherwise leads to the bug behaviour. This also means our hopes are dashed of finding a clue about a potential software workaround, and we won’t be able to provide one – at least not as of now.

Still interested in the overall impact, we started looking further into other microcode updates. We noticed this register was already written in all previous microcode updates applicable to this CPU model we could get our hands on, i.e. all updates for stepping 9 and also for stepping 2 (which isn’t a documented Apollo Lake CPU stepping, so might be a Broxton relic?).

minipli@bell:~/atom_bug/ucode$ for i in 06-5c-0*; do echo $i:; update_parser.py --show $i | grep 0x0000063b; done

06-5c-02-0x14:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

06-5c-09-0x36:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

06-5c-09-0x38:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

06-5c-09-0x40:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

06-5c-09-0x44:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

06-5c-0a-0x10:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

06-5c-0a-0x16:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

06-5c-0a-0x1e:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

06-5c-0a-0x20:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

06-5c-0a-0x20-dbg:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

06-5c-0a-0x22:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000

The above files are named “<family>-<model>-<stepping>-0x<microcode revision>”.

Please note the lack of the second write to address 0x63b for microcode updates for stepping 10.

The last two files are microcode updates containing the fix and not triggering the bug any more.
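The comparison above can also be done mechanically. The following Python sketch parses text in the format of the listing above (a file name line ending in a colon, followed by "address: ..., mask: ..., value: ..." lines) and reports the files that lack the second write. It assumes that exact output format and treats the value 0x04000000 as the marker of the fix; the function name is made up for this example.

```python
SECOND_WRITE = 0x04000000  # value of the additional write to address 0x63b


def files_missing_second_write(listing: str) -> list[str]:
    """Return the file names whose 0x63b writes lack the second value."""
    writes: dict[str, list[int]] = {}
    current = None
    for raw in listing.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("address:"):
            if current is not None:
                writes[current].append(int(line.rsplit("value:", 1)[1], 16))
        elif line.endswith(":"):
            current = line[:-1]
            writes[current] = []
    return [name for name, values in writes.items() if SECOND_WRITE not in values]


SAMPLE = """\
06-5c-09-0x44:
address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000
address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000
06-5c-0a-0x20:
address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000
06-5c-0a-0x22:
address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000
address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000004000000
"""

missing = files_missing_second_write(SAMPLE)
print(missing)  # ['06-5c-0a-0x20']
```

Fed with the full listing, this should single out the stepping 10 files from 0x10 through 0x20, matching the observation above.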

We also believe the Denverton family of CPUs may be affected, as all microcode updates for 06-5f-01 up to the current revision 0x34 also lack the second CRBUS register write.

minipli@bell:~/atom_bug/ucode$ for i in 06-5f-*; do echo $i:; update_parser.py --show $i | grep 0x0000063b; done

06-5f-01-0x24:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

06-5f-01-0x2e:

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000

06-5f-01-0x34:

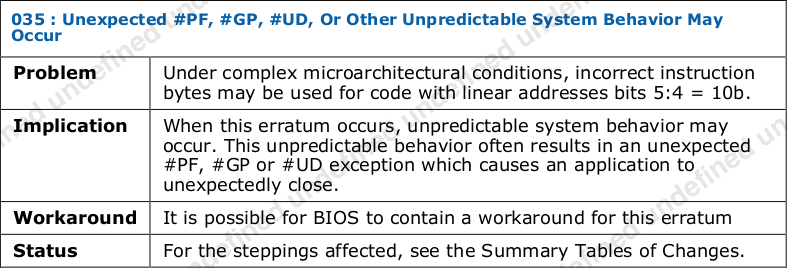

address: 0x0000063b, mask: 0xffffffffffffffff, value: 0x0000000080000000Unfortunately, we’re not able to analyze microcode updates for Goldmont Plus at this point. However, Erratum GLK-035 seems to hint that this bug was inherited from Apollo Lake up to Gemini Lake (Goldmont Plus) and only fixed there recently:

Information Request: If you’re able to decrypt Gemini Lake microcode update files, please check if there was an additional CRBUS register write added like shown above and drop us a note. It must have happened after revision 0x28, but no later than revision 0x36. — Confirmed!

The above erratum’s mention of "code with linear addresses bits 5:4 = 10b" hints at the relevance of our RAP-enabled workloads and at why our attempts at more branch alignment didn't work around the CPU's error case: such addresses can include the 32-byte aligned branch targets that are more likely to appear under RAP-enabled kernels. This also suggests the reach of the problem is much wider than our particular instrumentation, as we'll talk about next.
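The address condition quoted from the erratum is easy to express as a bit test. The Python sketch below only illustrates that one quoted fragment; the full trigger conditions are not public, and the example addresses are hypothetical rather than taken from the crashes above.

```python
def bits_5_4(addr: int) -> int:
    """Extract bits 5:4 of a linear address."""
    return (addr >> 4) & 0b11


def matches_erratum_window(addr: int) -> bool:
    """True if bits 5:4 of the address equal 10b, i.e. offsets 0x20..0x2f
    within a 64-byte block."""
    return bits_5_4(addr) == 0b10


# Hypothetical 32-byte aligned branch targets
for addr in (0xffffffff81000020, 0xffffffff81000040, 0xffffffff81000060):
    print(hex(addr), matches_erratum_window(addr))
```

Every second 32-byte aligned address (those at an odd multiple of 32) falls into this window, so aligning branch targets to 32 bytes does not steer code away from it.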

Taking Action

So was this just an oversight of Intel when creating microcode updates? Or a supposed-to-be-fixed bug in stepping 10 that is, in fact, not fixed? Who knows. All we know is that we won’t be able to ship a software workaround, so we instead added this big warning when we detect running on a broken system:

[ 0.000000] ***************************************************************

[ 0.000000] ** WARNING WARNING WARNING WARNING WARNING WARNING WARNING **

[ 0.000000] ** **

[ 0.000000] ** Atom branch bug detected, expect spurious kernel crashes! **

[ 0.000000] ** BIOS microcode update is *required*, tainting kernel. **

[ 0.000000] ** **

[ 0.000000] ** WARNING WARNING WARNING WARNING WARNING WARNING WARNING **

[ 0.000000] ***************************************************************

The official fix will likely ship in microcode update revision 0x22 or a later release.

As the microcode update is already five months old and the official microcode update source still doesn’t list it, we thought it might help others still running into similar issues by providing it ourselves: ucode_506ca_0x22.zip. Intel, fortunately, provided it with a license allowing redistribution.

While we were initially unable to reproduce the issue on a vanilla kernel, others seem to have run into this issue by simply running userland workloads like compiling Haskell sources. More recently, a new member of our team was also able to trigger the issue in userland by compiling QEMU. So even if we were able to work around the issue for the kernel itself, it's clear now that the bug could present itself (in obvious crashing ways or more subtle corruptions) via certain userland code without special instrumentation as well. This leaves us with only one option for remediation advice: patch your CPUs!

FWIW, we also saw the same CPU behavior on the slightly older N4200 processor, released in Q3 2016. This isn’t especially surprising, given it’s based on Apollo Lake as well and even shares the same CPUID with the x7-E3950 processor.

The Security Angle

We already hinted in the beginning that this issue might have security implications as we were able to trigger the bug from within a VM. If a malicious VM can prepare the CPU state such that the actual bug would happen in the host kernel, this would be, at least, a DoS issue. But as we cannot pinpoint the concrete “complex microarchitectural conditions” needed, we can only advise our readers, again, to apply the microcode update.

Conclusions

Hardware has bugs, too

It was hard for me to accept that such a severe hardware bug could exist when running fairly routine code sequences. I tried very hard to find a software bug, but failed to find one that would explain the observed behaviour. It took a lot of time to convince myself that this was, in fact, a CPU bug. But, to quote a wise man: “When you have eliminated all which is impossible, then whatever remains, however improbable, must be the truth.” – Arthur Conan Doyle, “The Adventure of the Blanched Soldier”

Intel needs better QA

Apparently, the underlying problem was known before, as microcode updates for steppings 2 and 9 prove. However, that knowledge got lost for stepping 10, suggesting this is a QA fail, either as in not thoroughly enough verifying the bug was, indeed, (not) fixed by the new stepping or, even worse, by simply forgetting to include the fix already present in earlier releases.

Trust your skills

Blaming the compiler or even the hardware isn’t always wrong. With the increasing complexity of these systems/devices (and the older one gets), it simply becomes more and more likely that one runs into serious issues like the one covered here.

Update (4/21/2022)

Intel recently released official microcode updates on their GitHub repository. The 0x22 revision above wasn't published, and a subsequent 0x24 revision released in February reintroduced the bug discussed above. We have confirmed, however, that the just-released "microcode-20220419" containing microcode revision 0x28 does finally include the fix for the issue. Make sure to upgrade to that version to get this bug fixed for good!
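Putting the revision history from this article together, a quick check of a Linux system could look like the Python sketch below. It is not the detection logic used in grsecurity, which isn't shown here. It assumes the usual x86 /proc/cpuinfo field names, only covers family 6, model 0x5c, stepping 10, and assumes that revisions at or above 0x28 keep the fix while unmentioned revisions in between count as affected.

```python
FIXED_UNPUBLISHED = {0x22}   # fixed per the article; 0x24 reintroduced the bug
FIRST_FIXED_OFFICIAL = 0x28  # shipped in "microcode-20220419"


def parse_first_cpu(cpuinfo: str) -> dict[str, str]:
    """Parse the first logical CPU block of /proc/cpuinfo-style text."""
    fields: dict[str, str] = {}
    for line in cpuinfo.splitlines():
        if not line.strip():
            if fields:
                break  # end of the first CPU block
            continue
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    return fields


def needs_microcode_update(cpuinfo: str) -> bool:
    f = parse_first_cpu(cpuinfo)
    cpu = (int(f["cpu family"]), int(f["model"]), int(f["stepping"]))
    if cpu != (6, 0x5C, 10):
        return False  # not the stepping discussed in this article
    rev = int(f["microcode"], 16)
    return not (rev in FIXED_UNPUBLISHED or rev >= FIRST_FIXED_OFFICIAL)


def sample(stepping: int, revision: str) -> str:
    # Hypothetical cpuinfo excerpt for testing, not captured from real hardware
    return (f"cpu family\t: 6\nmodel\t\t: 92\nstepping\t: {stepping}\n"
            f"microcode\t: {revision}\n\n")


print(needs_microcode_update(sample(10, "0x20")))  # True
print(needs_microcode_update(sample(10, "0x28")))  # False
```

On a real system the input would be the contents of /proc/cpuinfo; processors outside this one stepping are deliberately reported as not covered rather than as safe.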